HAR file viewer

If this analysis does not resolve the problem, open a support ticket and upload the HAR file or attach it to your email so that Databricks can analyze it.

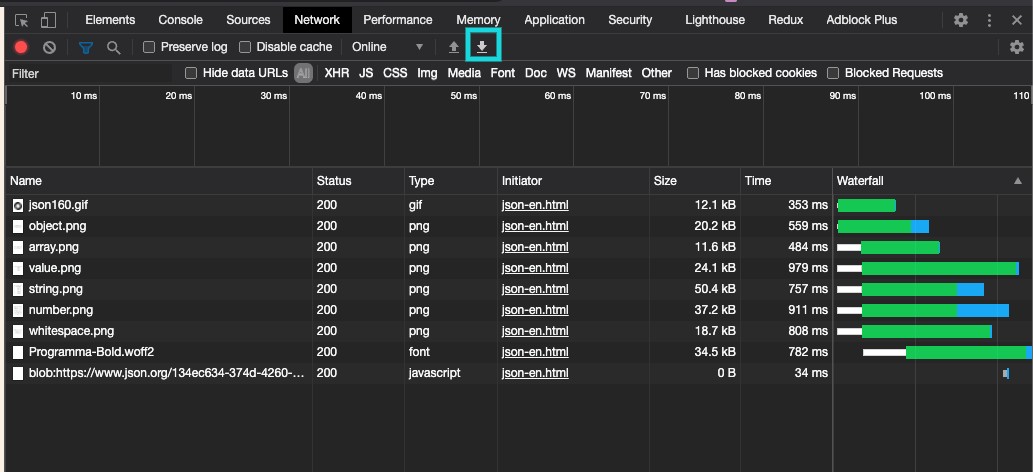

Press F12 on the keyboard to open Chrome Developer Tools. Then select the Network tab and click Preserve log.


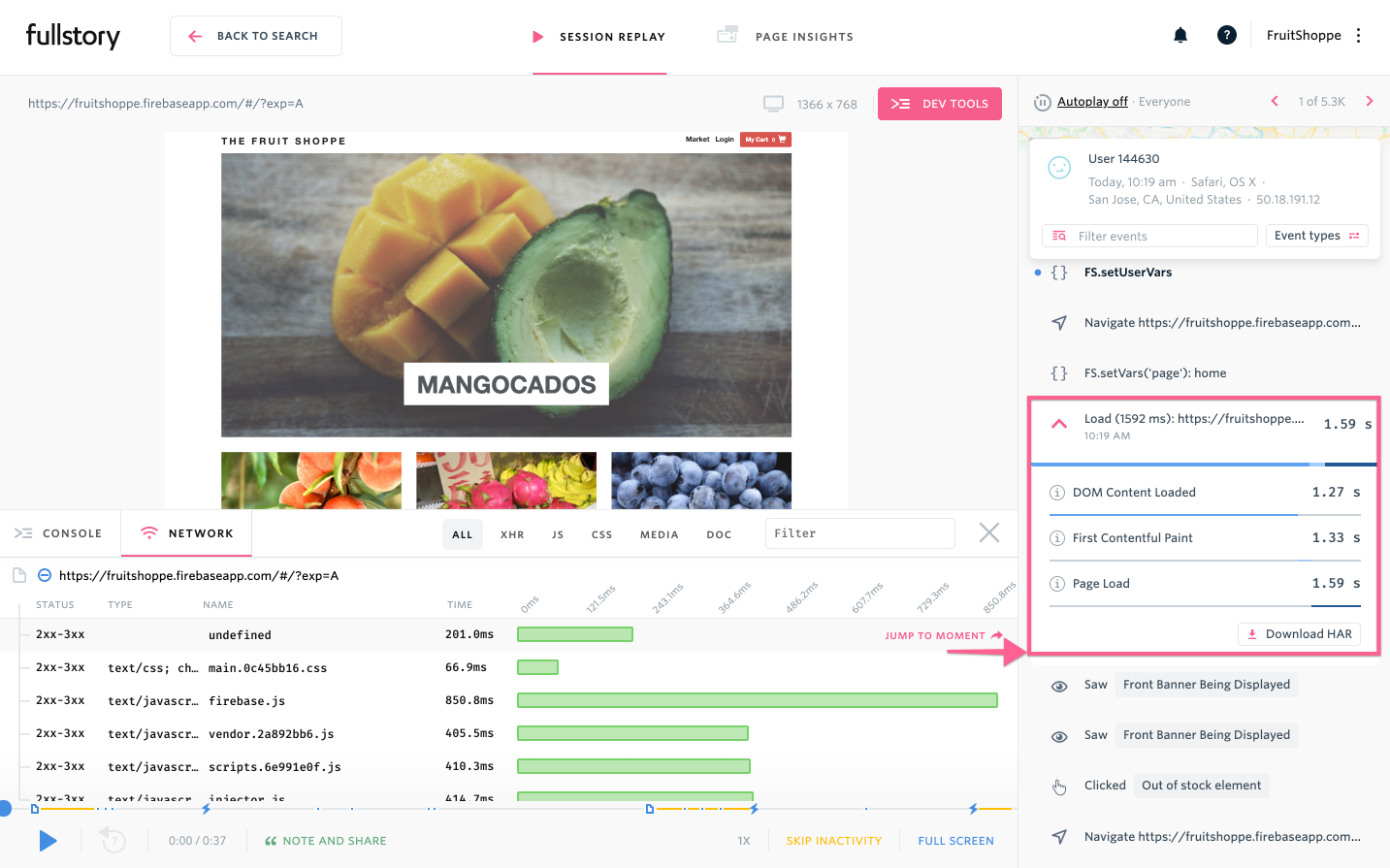

This blog explains how to collect a network trace and how to view it remotely. Chrome Developer Tools is used to capture the network trace, which can only be saved as a HAR file.

Open Google Chrome and go to the page where the issue occurs. In the Chrome menu bar, select View > Developer > Developer Tools. In the panel at the bottom of your screen, select the Network tab. For other browsers, see G Suite Toolbox HAR Analyzer. This tool helps you analyze the logs and identify the exact API and the time taken for each request.

A universal file viewer is a program you can use to open hundreds of different types of files (depending on the format).
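If you would rather inspect a saved trace yourself, the following is a minimal sketch of finding the slowest requests in a HAR file with Node.js. It assumes the standard HAR 1.2 layout (`log.entries[]`, each with `time`, `request.url`, and `response.status`). The helper names `loadHar` and `slowestRequests` and the sample data are hypothetical and exist only for illustration.

```javascript
const { readFileSync } = require('fs')

// Parse a HAR file from disk (the path is whatever you saved from DevTools).
function loadHar(path) {
  return JSON.parse(readFileSync(path, 'utf8'))
}

// Return the `limit` slowest requests, using the HAR `time` field (total ms).
function slowestRequests(har, limit = 5) {
  return har.log.entries
    .map((entry) => ({
      url: entry.request.url,
      status: entry.response.status,
      ms: entry.time,
    }))
    .sort((a, b) => b.ms - a.ms)
    .slice(0, limit)
}

// Tiny hand-written sample so the sketch runs without a real capture.
const sampleHar = {
  log: {
    entries: [
      { time: 120, request: { url: 'https://example.com/api/a' }, response: { status: 200 } },
      { time: 950, request: { url: 'https://example.com/api/b' }, response: { status: 200 } },
      { time: 40, request: { url: 'https://example.com/app.js' }, response: { status: 304 } },
    ],
  },
}

console.log(slowestRequests(sampleHar, 2))
```

With a real capture, you would call `slowestRequests(loadHar('your-file.har'))` instead of using the sample object.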

The Azure Databricks user interface seems to be running slowly. User interface performance issues typically occur due to network latency or a database query taking more time than expected. In order to troubleshoot this type of problem, you need to collect network logs and analyze them to see which network traffic is affected. In most cases, you will need the assistance of Databricks Support to identify and resolve issues with Databricks user interface performance, but you can also analyze the logs yourself with a tool such as G Suite Toolbox HAR Analyzer.

Hadoop archives are one of the methods used to reduce the load on the NameNode by archiving the files and referring to all the archives as a single file via the har reader. Testing: To understand the behavior of the HAR, we try the following example. harSourceFolder2: Where the initial set of small files is stored.

Next, the generated HAR is stored in page.har, and we can use it in the HAR Viewer for performance analysis. We can extract a lot of valuable information from HAR files, such as:

- Protocols being used in the page (HTTP/1.1, HTTP/2, h3-29).
- Request timing information (e.g., waiting and downloading times).

With this information, we can identify bottlenecks (e.g., what is the slowest request of that URL), find low-hanging fruit (e.g., asset compression is one flag away in your build system tool) and prioritize tasks in order to improve performance on our pages.
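As a rough illustration of pulling the protocol and timing information out of a HAR object, here is a small sketch. It assumes the HAR 1.2 fields `response.httpVersion`, `timings.wait`, and `timings.receive`. The function names `protocolCounts` and `timingBreakdown` and the sample entries are made up for this example.

```javascript
// Count how many requests used each protocol (HAR field: response.httpVersion).
function protocolCounts(har) {
  const counts = {}
  for (const entry of har.log.entries) {
    const protocol = entry.response.httpVersion || 'unknown'
    counts[protocol] = (counts[protocol] || 0) + 1
  }
  return counts
}

// Waiting vs. downloading time per request (HAR fields: timings.wait, timings.receive).
// HAR uses -1 for timings that do not apply (for example dns or connect);
// clamping negative or missing values to 0 here is purely defensive.
function timingBreakdown(har) {
  const clamp = (ms) => (typeof ms === 'number' && ms > 0 ? ms : 0)
  return har.log.entries.map((entry) => ({
    url: entry.request.url,
    waitMs: clamp(entry.timings.wait),
    receiveMs: clamp(entry.timings.receive),
  }))
}

// Hand-written sample entries; a real HAR carries many more fields.
const exampleHar = {
  log: {
    entries: [
      {
        request: { url: 'https://example.com/' },
        response: { httpVersion: 'h2' },
        timings: { wait: 80, receive: 30 },
      },
      {
        request: { url: 'https://example.com/api/items' },
        response: { httpVersion: 'h2' },
        timings: { wait: 600, receive: 200 },
      },
      {
        request: { url: 'https://example.com/legacy.css' },
        response: { httpVersion: 'http/1.1' },
        timings: { wait: 10, receive: -1 },
      },
    ],
  },
}

console.log(protocolCounts(exampleHar))
console.log(timingBreakdown(exampleHar))
```

A long `waitMs` relative to `receiveMs` usually points at server-side processing, while the reverse suggests a large or uncompressed payload.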

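In my other posts, I shared how to use the lighthouse() function to get all kinds of information, from web vitals metrics to page screenshots. What I didn't mention was that the function also keeps the artifacts created by the DevTools protocol. This is what the snippet stores in `defaultPass`: the array of raw events that chrome-har-capturer needs to generate a HAR file. We then use the fromLog function to build the HAR object, which we store in the file system in the following line. If you are curious about how the fromLog function works, I would recommend reading the package source code, especially one of its tests.

```js
const { writeFileSync } = require('fs')
const lighthouse = require('lighthouse')
const chromeLauncher = require('chrome-launcher')
const { fromLog } = require('chrome-har-capturer')

// ... the Chrome launch and the definitions of `url` and `options` are not shown in the source;
// the statements below must run inside an async function ...

// Keep the raw DevTools log events collected by Lighthouse.
// The destructuring pattern is missing in the source and is reconstructed here from context (an assumption).
const { artifacts: { devtoolsLogs: { defaultPass } } } = await lighthouse(url, options)

// Build the HAR object from the raw events and write it to disk.
const har = await fromLog(url, defaultPass)
writeFileSync('page.har', JSON.stringify(har))
```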